
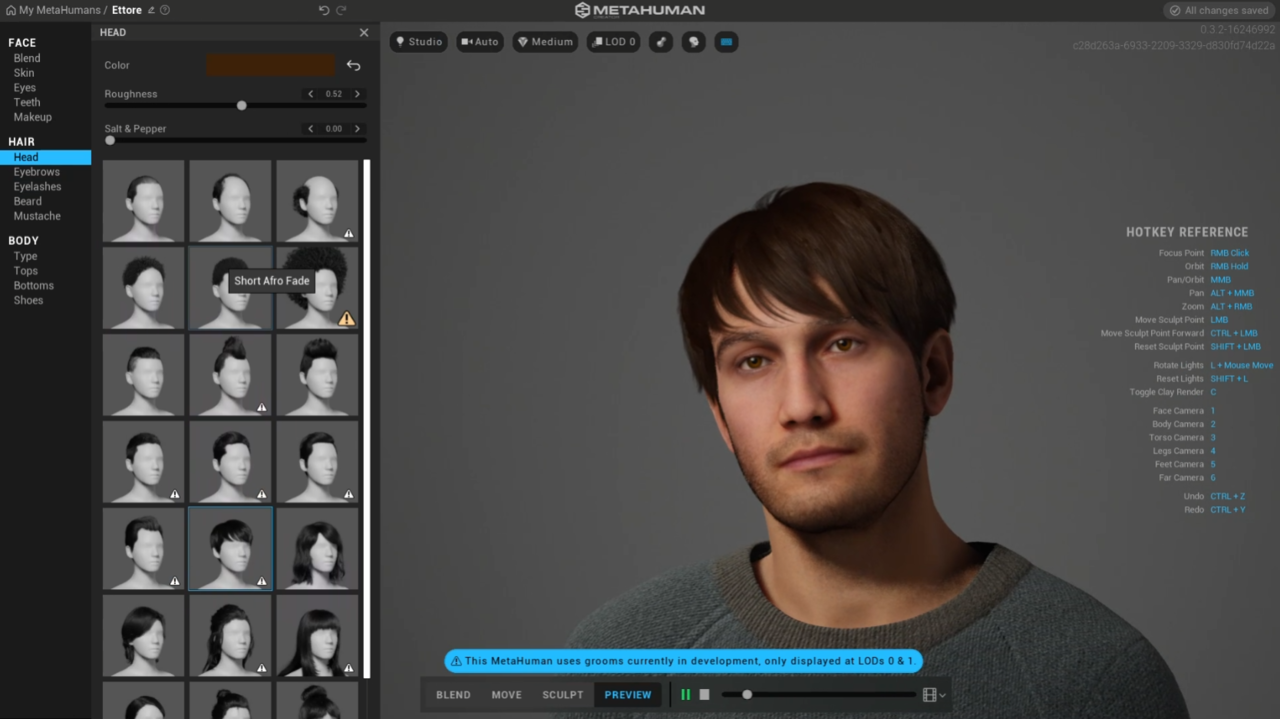

You should now see your device listed as a subject. In the Unreal Editor, open the Live Link panel by selecting Window > Live Link from the main menu. See also the Working with Multiple Users section below.įor details on all the other settings available for the Live Link Face app, see the sections below. If you need to broadcast your animations to multiple Unreal Editor instances, you can enter multiple IP addresses here. You'll typically need to do this in a third-party rigging and animation tool, such as Autodesk Maya, then import the character into Unreal Engine.įor a list of the blend shapes your character will need to support, see the Apple ARKit documentation. You need to have a character set up with a set of blend shapes that match the facial blend shapes produced by ARKit's facial recognition. You'll need to have an iOS device that supports ARKit and depth API.įollow the instructions in this section to set up your Unreal Engine Project, connect the Live Link Face app, and apply the data being recorded by the app to a 3D character.Įnable the following Plugins for your Project:

You'll have best results if you're already familiar with the following material: The material on this page refers to several different tools and functional areas of Unreal Engine.

#Unreal engine 4 shift left click not working windows 10 how to

This page explains how to use the Live Link Face app to apply live performances on to the face of a 3D character, and how to make the resulting facial capture system work in the context of a full-scale production shoot.

#Unreal engine 4 shift left click not working windows 10 free

If your iOS device contains a depth camera and ARKit capabilities, you can use the free Live Link Face app from Epic Games to drive complex facial animations on 3D characters inside Unreal Engine, recording them live on your phone and in the engine.

#Unreal engine 4 shift left click not working windows 10 full

I can provide the full log if needed when I’m back at my device, but this is the only error and the warnings are related to pretty common issues (bad engine version warnings, etc) so I doubt they are relevant.Recent models of the Apple iPhone and iPad offer sophisticated facial recognition and motion tracking capabilities that distinguish the position, topology, and movements of over 50 specific muscles in a user's face.

#Unreal engine 4 shift left click not working windows 10 code

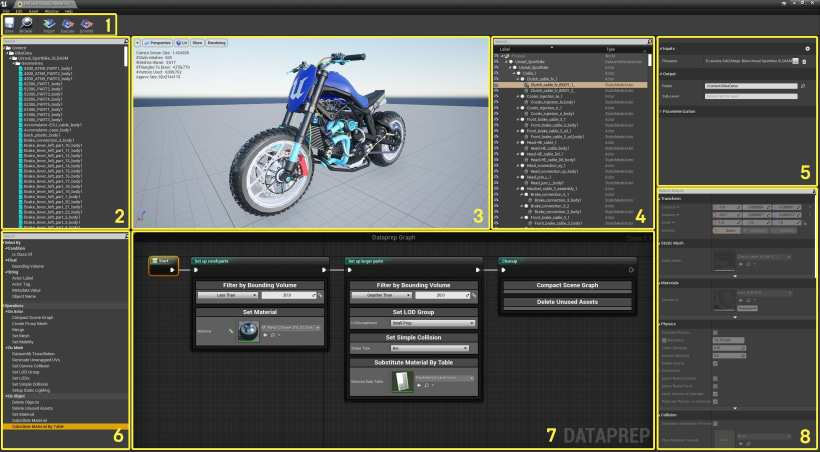

I planned on re-writing the prototyping code in C++ anyway, but this was marketed as a way to save a bunch of time so I tried it out. That seems to be the problem array according to the log, but I have no idea how to fix this in blueprints. shift left click will swing a shield like a sword), so I held an array of enums to determine what action match to the modifier state when input is sent. My game supports weapons that have alternate inputs based on modifier keys (i.e. PackagingResults: Error: FEmitHelper::LiteralTerm cannot parse array value "NewEnumerator0" error: class: /Temp/_TEMP_BP_/Game/SpaceSim/Addons/Inventory/Core/Holdable/BP_BaseHoldable.BP_BaseHoldable_C UATHelper: Packaging (Windows (64-bit)): LogK2Compiler: Error: FEmitHelper::LiteralTerm cannot parse array value "NewEnumerator0" error: class: /Temp/_TEMP_BP_/Game/SpaceSim/Addons/Inventory/Core/Holdable/BP_BaseHoldable.BP_BaseHoldable_C Here is the last bit from the log right before the error was thrown. I was doing an inclusive build and can switch to exclusive if needed, but I’d like to figure out how to fix this if I can. I was running an nativized build for my game and ran into this error.
